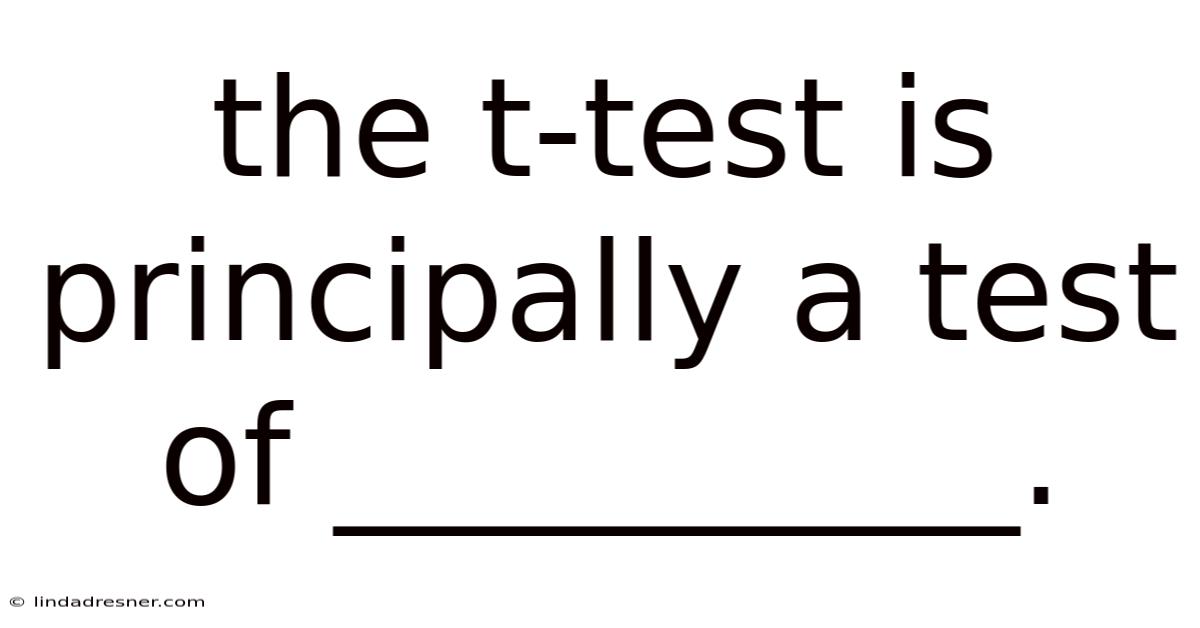

When students and researchers first encounter statistical analysis, one question consistently surfaces: the t-test is principally a test of what, exactly? The short answer is that it is a test of the difference between means, designed to determine whether the observed gap between two groups is statistically significant or merely the result of random chance. Whether you are comparing the effectiveness of two teaching methods, evaluating medical treatments, or analyzing consumer behavior, understanding this foundational statistical tool is essential. In this guide, we will break down how the t-test works, why it matters, and how you can apply it confidently in your own research or academic work.

Introduction to the T-Test

The t-test stands as one of the most widely used inferential statistics in fields ranging from psychology and biology to economics and education. At its core, it answers a simple yet powerful question: *Are these two groups truly different, or could this difference just be noise?* Unlike descriptive statistics that merely summarize data, the t-test belongs to the family of hypothesis testing methods. It allows researchers to draw conclusions about larger populations based on smaller, manageable samples.

The beauty of this test lies in its adaptability. It accounts for sample size and data variability, making it especially reliable when working with smaller datasets where the population standard deviation is unknown. Developed by William Sealy Gosset, who published it under the pseudonym Student in 1908, the test was originally created to monitor the quality of stout beer at the Guinness Brewery. By focusing on the difference between sample means, the t-test provides a structured, mathematically sound pathway to evidence-based decision-making. Today, it remains a cornerstone of empirical research across nearly every scientific discipline.

The Scientific Explanation Behind the T-Test

To truly grasp why the t-test is principally a test of mean differences, we must look at the statistical mechanics driving it. The test operates on the t-distribution, a probability curve that closely resembles the normal distribution but features heavier tails. These heavier tails account for the increased uncertainty that comes with smaller sample sizes. When you calculate a t-value, you are essentially measuring the signal-to-noise ratio of your data. The signal represents the actual difference between group means, while the noise reflects the natural variability within each group.

The formula for a basic independent samples t-test captures this relationship: t = (Mean₁ − Mean₂) / Standard Error of the Difference
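To make the signal-to-noise idea concrete, here is a minimal sketch of the formula using only Python's standard library. It assumes the equal-variance (pooled) form of the standard error; the function name `pooled_t_statistic` and the two score lists are made up for illustration and are not from any real study.

```python
import math
import statistics


def pooled_t_statistic(group_a, group_b):
    """Return (t, degrees_of_freedom) for an equal-variance two-sample t-test."""
    n_a, n_b = len(group_a), len(group_b)
    mean_a, mean_b = statistics.mean(group_a), statistics.mean(group_b)
    # statistics.variance uses the sample (n - 1) denominator
    var_a, var_b = statistics.variance(group_a), statistics.variance(group_b)

    df = n_a + n_b - 2
    # Noise: pooled variance, then the standard error of the difference
    pooled_var = ((n_a - 1) * var_a + (n_b - 1) * var_b) / df
    standard_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    # Signal divided by noise
    return (mean_a - mean_b) / standard_error, df


# Hypothetical test scores for two teaching methods
method_a = [78, 85, 90, 72, 88]
method_b = [70, 75, 80, 68, 77]
t_value, df = pooled_t_statistic(method_a, method_b)
print(f"t = {t_value:.3f}, df = {df}")
```

The t-value alone is not a verdict; it still has to be referred to the t-distribution with the matching degrees of freedom, which is what statistical software does when it reports a p-value.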

Several key assumptions must be met for the results to remain valid:

- Independence of observations: Each data point must not influence another.

- Normality: The data should approximately follow a normal distribution, especially with smaller samples.

- Homogeneity of variance: The spread of scores in both groups should be roughly equal (though Welch’s t-test can adjust for violations).

- Continuous data: The dependent variable should be measured on an interval or ratio scale.

When these conditions align, the t-test transforms raw numbers into a p-value, which indicates the probability of observing results at least as extreme as yours if the null hypothesis were true. A p-value below the conventional threshold of 0.05 typically signals that the difference is statistically significant, allowing researchers to reject the null hypothesis. Importantly, the t-distribution adjusts with the degrees of freedom, so smaller samples are held to a stricter standard of evidence before significance is declared.

Step-by-Step Guide to Conducting a T-Test

Applying the t-test correctly requires a systematic approach. Follow these steps to ensure accuracy and interpretability:

- Define Your Research Question: Clearly state what you are comparing. For example: Does Group A score higher on a standardized test than Group B?

- Formulate Hypotheses: Establish your null hypothesis (H₀: no difference between the population means) and alternative hypothesis (H₁: the population means differ).

- Check Assumptions: Run preliminary tests for normality (e.g., Shapiro-Wilk) and equal variances (e.g., Levene’s test). Address violations before proceeding.

- Calculate the T-Statistic: Use statistical software or manual computation to derive the t-value based on your sample means, standard deviations, and sample sizes.

- Determine Degrees of Freedom: This value depends on your sample size and dictates which t-distribution curve to reference.

- Find the P-Value: Compare your t-statistic against critical values or let software generate the exact probability.

- Interpret Results: If p < 0.05, conclude that the difference is statistically significant. Always pair statistical findings with effect size measures (like Cohen’s d) to understand practical importance.

- Report Findings Transparently: Include means, standard deviations, t-values, degrees of freedom, p-values, and effect sizes in your final documentation.
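The interpretation and reporting steps above can be sketched in a few lines. This is an illustrative example using only the standard library, assuming Cohen's d is computed with the pooled standard deviation (one common convention among several); `cohens_d`, `describe`, and the data are hypothetical names and values.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    var_a, var_b = statistics.variance(group_a), statistics.variance(group_b)
    pooled_sd = math.sqrt(((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd


def describe(name, values):
    """One-line descriptive summary suitable for a results section."""
    return (f"{name}: M = {statistics.mean(values):.2f}, "
            f"SD = {statistics.stdev(values):.2f}, n = {len(values)}")


# Hypothetical scores, not real data
group_a = [78, 85, 90, 72, 88]
group_b = [70, 75, 80, 68, 77]

print(describe("Group A", group_a))
print(describe("Group B", group_b))
print(f"Cohen's d = {cohens_d(group_a, group_b):.2f}")
```

The t-value, degrees of freedom, and p-value from your statistical software would be reported alongside these numbers.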

Types of T-Tests and When to Use Them

Not all comparisons require the same approach. Selecting the correct variant ensures your analysis remains both accurate and meaningful:

- Independent Samples T-Test: Compares means from two completely separate groups (e.g., treatment vs. control, male vs. female participants).

- Paired Samples T-Test: Evaluates the same subjects under two different conditions or time points (e.g., pre-test vs. post-test scores, before-and-after medication measurements).

- One-Sample T-Test: Tests whether a single group’s mean differs from a known or theoretical value (e.g., comparing average student height to the national average, or checking if a manufacturing process meets a target specification).

Each variation adjusts the calculation slightly to match the research design, but they all share the same foundational purpose: evaluating whether observed mean differences exceed what random sampling error would typically produce.
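As a rough illustration of how the variants relate, the sketch below computes the one-sample statistic and then treats the paired test as a one-sample test on the within-subject differences. The function names and the pre/post scores are assumptions made up for this example.

```python
import math
import statistics


def one_sample_t(values, reference_mean):
    """Return (t, df) for testing whether the mean of `values` differs from `reference_mean`."""
    n = len(values)
    standard_error = statistics.stdev(values) / math.sqrt(n)
    return (statistics.mean(values) - reference_mean) / standard_error, n - 1


def paired_t(before, after):
    """Return (t, df) for paired data: a one-sample test on the differences against zero."""
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    differences = [a - b for a, b in zip(after, before)]
    return one_sample_t(differences, 0.0)


# Hypothetical pre-test and post-test scores for the same five students
pre = [60, 72, 65, 80, 70]
post = [66, 75, 70, 82, 78]
t_paired, df_paired = paired_t(pre, post)
print(f"paired: t = {t_paired:.3f}, df = {df_paired}")
```

Because the paired version works on differences, stable person-to-person variation cancels out, which is why it is often more sensitive than an independent samples test on the same numbers.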

Frequently Asked Questions

What happens if my data violates the normality assumption? The t-test is remarkably robust to minor deviations from normality, especially with sample sizes above roughly 30, thanks to the Central Limit Theorem. For heavily skewed data or very small samples, consider non-parametric alternatives like the Mann-Whitney U test or Wilcoxon signed-rank test.

Is a statistically significant result always practically important? Not necessarily. Statistical significance only tells you that a difference is unlikely due to chance. Always calculate and report effect sizes to determine whether the difference matters in real-world applications. A tiny difference can be statistically significant with a massive sample but practically meaningless.

Can I use a t-test to compare more than two groups? No. When comparing three or more groups, you should use Analysis of Variance (ANOVA) instead. Running multiple t-tests inflates the Type I error rate, increasing the chance of false positives.
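The inflation is easy to quantify with a back-of-the-envelope calculation. The sketch below assumes the pairwise tests are independent, which is a simplification (tests that share a group are correlated), so read the numbers as an approximation; `familywise_error_rate` is a hypothetical helper name.

```python
from math import comb


def familywise_error_rate(num_groups, alpha=0.05):
    """Return (number of pairwise tests, chance of at least one false positive).

    Assumes independent tests, which only approximates real pairwise comparisons.
    """
    num_tests = comb(num_groups, 2)
    return num_tests, 1 - (1 - alpha) ** num_tests


for k in (3, 4, 5):
    tests, rate = familywise_error_rate(k)
    print(f"{k} groups -> {tests} pairwise tests, familywise error rate about {rate:.3f}")
```

Even with only three groups, the chance of at least one spurious "significant" result is already well above the nominal 5%.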

Why does sample size matter so much in a t-test? Larger samples reduce the standard error, making it easier to detect true differences. However, this also means that with extremely large samples, even trivial differences can become statistically significant. Context and effect size remain crucial.
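A small calculation shows the effect. For two groups of equal size n and a fixed standardized difference d, the pooled t-statistic simplifies to t = d × √(n / 2), so the same tiny effect produces an ever larger t as n grows. The value d = 0.02 below is an arbitrary illustration, and `t_for_fixed_effect` is a hypothetical helper name.

```python
import math


def t_for_fixed_effect(effect_size_d, n_per_group):
    """t-statistic implied by a fixed Cohen's d with two equal-sized groups."""
    return effect_size_d * math.sqrt(n_per_group / 2)


# The same trivial effect (d = 0.02) at increasing sample sizes
for n in (10, 100, 10_000, 1_000_000):
    print(f"n per group = {n:>9,}: t = {t_for_fixed_effect(0.02, n):.2f}")
```

The effect size never changes in this loop; only the sample size does, which is why significance by itself says little about practical importance.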

Conclusion

At its heart, the t-test is principally a test of the difference between means, but its true value extends far beyond a simple mathematical formula. It equips researchers, educators, and analysts with a reliable method to separate meaningful patterns from random variation. By understanding its assumptions, selecting the appropriate variant, and interpreting results alongside effect sizes, you can transform raw data into actionable insights. Statistics should never feel like a barrier to discovery; instead, it serves as a bridge between curiosity and evidence. As you continue exploring research methods, let the t-test be your first step toward making confident, data-driven conclusions that stand up to scrutiny and advance meaningful understanding in your field.

Extending the Toolkit: Practical Enhancements and Emerging Perspectives

Beyond the textbook formula, modern practitioners often augment a t-test with confidence intervals for the mean difference, which convey the precision of the estimate and sidestep the binary “significant / not significant” mindset. When the assumption of equal variances is questionable, Welch’s adjustment provides a robust alternative that adapts the degrees of freedom, preserving the test’s validity with little loss of power. For longitudinal or paired designs, randomization tests or bootstrap resampling can relax distributional constraints while still delivering an intuitive p-value that aligns with the observed data structure.
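Welch's adjustment can be sketched as follows. The statistic uses each group's own variance instead of a pooled one, and the degrees of freedom come from the Welch-Satterthwaite approximation, which is generally not a whole number. The function name `welch_t` and the data are illustrative assumptions.

```python
import math
import statistics


def welch_t(group_a, group_b):
    """Return (t, df) for Welch's unequal-variance t-test."""
    n_a, n_b = len(group_a), len(group_b)
    # Each group's variance divided by its own sample size
    va_n = statistics.variance(group_a) / n_a
    vb_n = statistics.variance(group_b) / n_b

    t = (statistics.mean(group_a) - statistics.mean(group_b)) / math.sqrt(va_n + vb_n)
    # Welch-Satterthwaite approximation to the degrees of freedom
    df = (va_n + vb_n) ** 2 / (va_n ** 2 / (n_a - 1) + vb_n ** 2 / (n_b - 1))
    return t, df


# Hypothetical scores; group_a is visibly more spread out than group_b
group_a = [78, 85, 90, 72, 88]
group_b = [70, 75, 80, 68, 77]
t_welch, df_welch = welch_t(group_a, group_b)
print(f"Welch: t = {t_welch:.3f}, df = {df_welch:.2f}")
```

With equal group sizes the Welch t-value matches the pooled one, but the degrees of freedom fall below n₁ + n₂ − 2 whenever the sample variances differ, which makes the test slightly more conservative.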

When the research question expands to include covariates, such as treatment effects adjusted for baseline characteristics, ANCOVA or linear regression frameworks become natural extensions of the t-test, offering flexibility to model complex relationships while retaining interpretability. Moreover, the rise of Bayesian t-tests introduces a complementary paradigm: rather than relying solely on p-values, analysts can express the evidence for or against the null hypothesis as a Bayes factor or posterior odds, directly quantifying the strength of support for competing hypotheses.

Finally, transparent reporting remains essential. Clear documentation of sample size calculations, effect-size estimates, confidence intervals, and any deviations from assumptions not only enhances reproducibility but also empowers peers to assess the practical relevance of findings. By integrating these refinements, researchers can transform a simple hypothesis test into a nuanced, evidence-rich analysis that bridges statistical rigor with substantive insight.

Conclusion: Mastering the t-test therefore entails more than memorizing a formula; it requires a thoughtful blend of methodological awareness, contextual judgment, and transparent communication, ensuring that each inference drawn from the data stands on a foundation of both statistical soundness and real-world relevance.